Sex Workers Versus the Algorithm

No, seriously, fuck the algorithm.

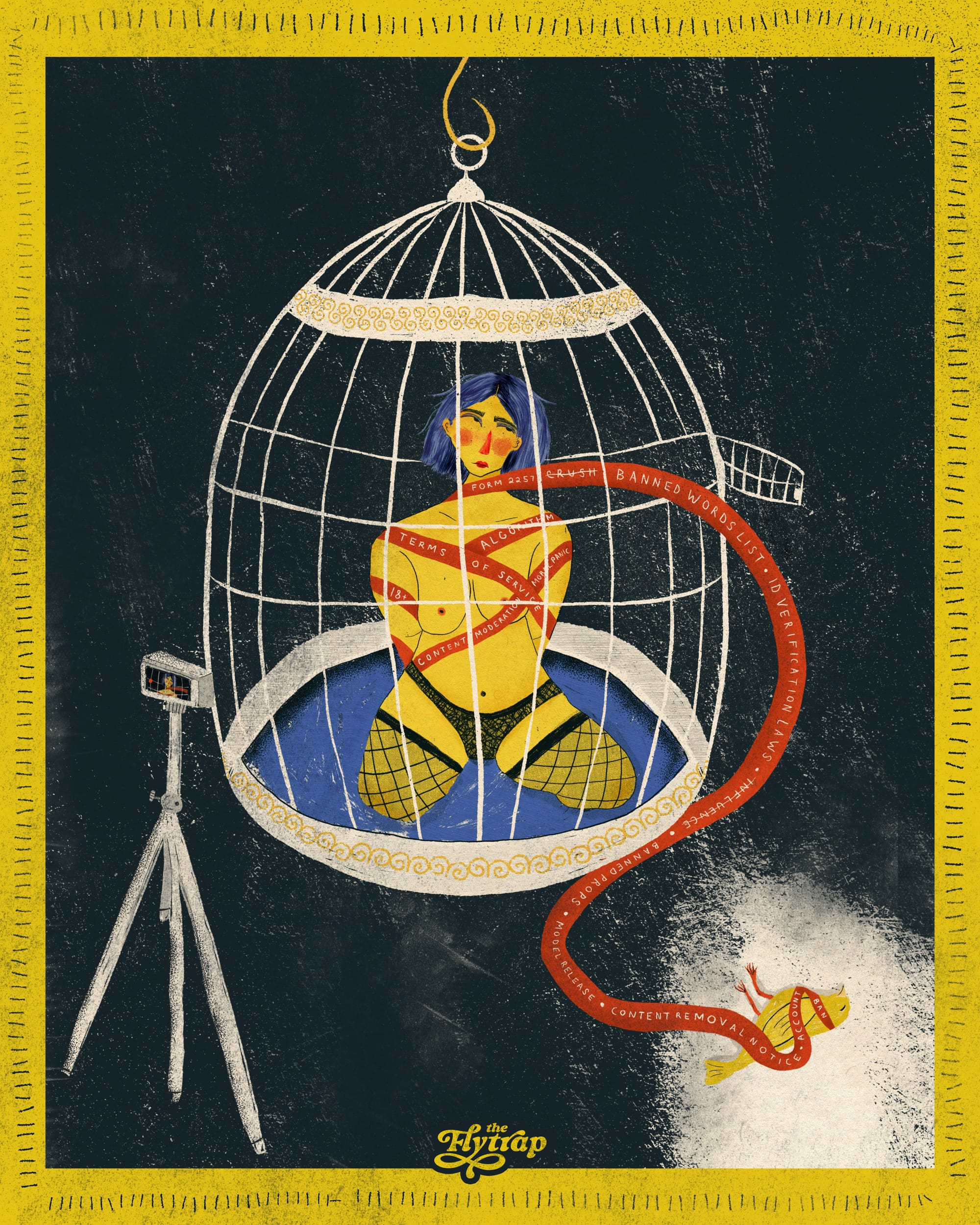

“I did not get into porn to format .pdfs,” says Lauren Kiley, a sex worker, long-time Flytrap patron, and the person first on my Rolodex when I want to talk to adult performers about their work. I had some questions for her that have been on the minds of a lot of people visiting adult websites: Why is the front page suddenly wall-to-wall stepmoms and stepsisters, why do searches for relatively vanilla content turn up no results, and how are so many people getting stuck in ovens?

The short answer is: payment processors.

The longer answer, though, is a collision of our society’s deep-seated loathing of sex workers, right-wing pearl clutching, capitalism, and the looming power of the tech industry over every aspect of our lives. Sex workers and organizations such as Call Off Your Old Tired Ethics, Hooker Army, and the Sex Workers Outreach Project have been fighting these issues for a very long time, and the issues have intensified in this political moment, one sex workers have long warned was coming.

One could think of the front page of Pornhub as the landing site of a larger cultural crisis. Anti-porn activists want you to think this is a battle to protect women from exploitation and to save the soul of America, but sex workers know that the real battle is their fight for autonomy, respect, and safe working conditions.

In 2020, Mastercard and Visa announced they would stop processing payments for Pornhub, thanks to pressure from a sustained campaign started by anti-porn activist Laila Mickelwait. Her “Traffickinghub” campaign was quickly supercharged by Exodus Cry, an extremist Christian organization determined to abolish sex work. Exodus Cry, founded in 2008 by Benjamin Nolot, builds on the work of organizations like Jerry Falwell’s Moral Majority, Focus on the Family, and their ilk—and has the audacity to cite noted abolitionist William Wilberforce as an inspiration for its work.

The “Traffickinghub” campaign claimed Pornhub was hosting child sexual abuse material (CSAM), nonconsensual content, and videos associated with human trafficking. If Exodus Cry’s concern is Pornhub hosting CSAM (which sex workers also think shouldn’t exist), then Facebook may be a bigger worry. Of the 21.7 million reports of suspected CSAM made to the National Center for Missing and Exploited Children in 2020, 94% came from Meta platforms. Meanwhile, Grok is barfing up nonconsensual AI porn on command; the Apple and Google app stores host scores of “nudify” apps; and 4chan and Reddit do their own fair share of nonconsensual content sharing. It sure seems like the problem with sexual exploitation is not Pornhub but rather a culture that loves profits and hates women.

Exodus Cry’s campaign came right out of the anti-sex work playbook that conservatives have been running for centuries, ginning up fears about exploitation and abuse and conjuring up images of helpless women trapped in inherently degrading, demeaning situations without agency or autonomy. The rescue industry argues that these women need a savior, something we’re reminded of every year during the annual Super Bowl Sex Trafficking Discourse, when breathless headlines repeat law enforcement claims that a horde of pimps is descending upon the host city with vans full of trafficked women and girls. This necessitates, obviously, an aggressive crackdown on sex workers, “for their own good.”

For the anti-sex work crowd, which includes not just Christian conservatives but also sex work-exclusionary radical feminists (SWERFs), all sex work is inherently evil. Sometimes it comes from deep inside the house, as in the case of the former sex worker who founded adult content platform ManyVids and did an about-face in January. She referred to the industry as “exploitative,” announcing that her goal was to “transition one million people out of the adult industry.” One might be forgiven for thinking the move smelled a lot like pulling the ladder up after her. The situation was made even more bizarre by a series of weird posts from the platform’s official social media accounts, suggesting that at least some ManyVids employees are also deep in the grips of some sort of AI-induced crisis.

The ultimate goal of the right is a total ban on pornography, but the anti-porn contingent knows the best way to get there is through slow creep: starting with those working on the fringe doing fetish work, consistently moving the goalposts on what “fetish” means, and nibbling away until there’s nothing left. It’s right out of the right-wing playbook on trans rights, access to abortion, and freedom of speech. During the 2020 “Traffickinghub” campaign, anti-porn activists leveraged the marginalized status of sex work to inflict reputational harm on credit card companies, which are more than happy to take as much of your money as possible but have no interest in getting mired in culture wars.

For payment processors, the price of doing business in the face of scaremongering headlines, activist stunts, letter-writing campaigns, and harassment simply wasn’t worth the hassle.


Check, Please

The Exodus Cry campaign had precisely the desired effect, contributing to the immense financial squeeze both online and in-person sex workers were already experiencing. Banks have historically been nervous about providing services to sex workers: A 2021 report by the Center for LGBTQ Economic Advancement & Research cited data from the Sex Workers Outreach Project (SWOP) showing that 45% of sex workers reported their bank accounts had been frozen or closed at some point during their careers. In some cases, they never got their money back.

The inability to access banking in a society that assumes everyone has a bank account and a credit score pushes sex workers even further to the margins, a turn of events that anti-porn crusaders view as a positive consequence that will push people out of the profession. Whether sex workers are operating legally or not, commercial banks view them as risky and undesirable clients, and the increased scrutiny makes these banks reluctant to accept funds associated with online sex work.

Visa and Mastercard's 2020 announcement rolled out in a herky-jerky, confusing way, as Samantha Cole reported for VICE. But it still had a seismic effect on adult content creators who considered Pornhub an important source of revenue. Despite the best efforts of bitcoin boosters, the vast majority of people who pay for adult content want to do so via credit card—and sex workers do not necessarily want to be paid in cryptocurrency, either. If credit card companies and the processors who run payments refuse to conduct transactions, people do not get paid for their work, but the platform can still run ads alongside their content.

The fact that sites can keep earning ad revenue from content that performers themselves make no money from is “something people really missed about tube sites,” Kiley said. On sites like Pornhub, “performers are barely making any money for streaming videos compared to what the platform earns on their ad revenue. They have always been making money off all this. Pornhub has done some evil shit! The foundation of their business model is stealing from sex workers and stealing their money.”

Often, those clips are stolen and posted without consent, which is a personal and economic violation for the performers. When performers are posting their own content, they have to hope viewers will be enticed enough by the free clip to go off-platform to see more. For tube sites with creator programs, the return on investment can be highly variable for all but the biggest creators.

Online sex workers who want to be compensated for their labor rather than simply generating advertising revenue for someone else may sell videos and photos on demand; do livestreams and cam sessions; produce customs (videos made to order for a specific buyer) on sites like OnlyFans; or do phone sex work. No matter where they work, the Visa/Mastercard decision caused a sea change in how adult websites craft, and enforce, their content standards and terms of service.

With the Mastercard and Visa announcement, adult websites saw the writing on the wall and began cracking down on content to stay in the good graces of payment processors. Fearing that processors under pressure from anti-porn activists would cut ties over explicit content, moderators set off a game of cat and mouse with content creators who are simply trying to make a living in an extremely precarious industry. This is censorship by payment processor, made possible by the stranglehold these two companies have on the financial services sector: If Mastercard says facesitting is out, adult content sites are going to make sure they’ve scrubbed their servers of facesitting.

“Visa and Mastercard do not have a legible set of standard rules for content moderation,” Kiley says. “They have one, but it doesn’t really make sense, so every single platform makes up their own terms of service based on what they think they can sell or get away with.” Those sites, in turn, provide indifferent, conflicting, and sometimes mystifying guidance about what they will and won’t allow, forcing content creators to guess at what kind of content might result in strikes, takedowns, demonetization, and bans, lest they make daddy—sorry, step-daddy—angry.

The Naughty List

That’s how we got to the proliferation of step-family content. Incest is extremely low-hanging fruit for content moderation: In many regions of the world, incest is illegal; it often includes implications that people are below the age of consent, which is definitely illegal; and it’s extremely taboo. Adult performers are actors who understand the difference between a staged scene and reality, but payment processors apparently cannot grasp this simple distinction. Consequently, content creators add a “step” to the mix to get around this issue, and when step-sis gets stuck in an oven or window, it’s predicament bondage by another name.

But this is just the tip of the iceberg, Kiley explains, because payment processors are constantly changing their standards and, consequently, so are the sites that want to keep making money off the adult content creators who use them to disseminate their work. This was an issue long before the Traffickinghub campaign: In 2019, adult performer Sophie Ladder started what Kiley affectionately calls “the spreadsheet,” a crowdsourced document shared amongst adult content creators that keeps track of which sites allow what kind of content.

The sprawling document includes a dizzying number of content restrictions—from fisting to age play to consuming drugs or alcohol—for clip sites, live streaming, fan sites, tube sites, video on demand, social media, and specialty sites. In a nod to the uneven consequences of these confusing and unspoken standards for performers, Ladder notes on the spreadsheet that “a site’s rules don’t necessarily correlate to their enforcement.”

The spreadsheet attempts to unpack the sometimes wildly inconsistent and bizarre rules of these sites, noting, for example, that one site allows solo—not paired—diaper content. “Pee,” but not “piss.” Prop weapons may be allowed, but in practice, videos featuring props may be banned. Hypnosis may be disallowed, but sometimes it’s possible to get around that by using different terminology. Restraint may be permissible in some scenarios—two limbs restrained, say—but not in others. Animals are not allowed onscreen—leading to content strikes for the occasional curious cat in the background, but also sometimes for stuffed animals or simply shapes that machine learning decides must be animals.


“They seem to be inconsistent on this” and “¯\_(°_o)_/¯” are frequent annotations on the spreadsheet, reflecting the frustrating ambiguities Ladder navigates as she tries to keep the references current. Content moderators are not painstakingly reviewing video titles and copy: They are relying on algorithms to do that work for them, and when an algorithm dings a content creator, it doesn’t say why. Hence the spreadsheet, which reverse-engineers content flags, identifying words used in titles or copy or things said on-screen that may result in a takedown.

The spreadsheet has become “more and more red over the years,” Ladder told me, reflecting the growing number of content restrictions. She documented nearly 200 words (a rapidly growing list that she noted was out of date when we spoke in January 2026) that can trigger flags tied to content bans.

Some banned words ostensibly target specific sex acts: “golden shower,” “lactation,” and “pegging.” Others zero in on cues around forbidden content: Many sites do not allow depictions of substance use on the grounds that impairment makes consent impossible, so they ban references to alcohol and drugs, such as “champagne.” Other words that can trigger a strike include “bite,” “cervix,” and “cycle.” A ban on “rapist” also means you can’t use the word “therapist.”

“Word bans are just ridiculous,” Ladder says. “Because a human is not reading through your video description and interpreting it as a human would. It’s a computer recognizing words. I just saw someone unable to post a video with the word 'Saturday' because the word 'turd' is in there.”

The computers tasked with moderating these sites are blissfully unaware of the fact that words can mean more than one thing. “Crush” and “squish,” for example, are blocked to address crush videos, but this also means, as Kiley notes, that you can’t title a video “I Have a Crush On You,” nor can you “squish” your face between a “giantess’s” boobs. If “smoking” is banned, then a “Smoking Hot Secretary” is banned too. A sleepy girlfriend can’t read to you at bedtime when references to sleep are disallowed due to concerns about nonconsensual content involving unconscious people.
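To see why a filter trips over “Saturday” and “therapist” but also over a crush or a smoking-hot secretary, it helps to look at the two simplest ways a word filter can work. This is a hypothetical sketch, not any platform’s actual code: the banned list, the sample titles, and the function names are invented for illustration. A naive filter checks whether a banned string appears anywhere in a title; a slightly smarter one only matches whole words.

```python
import re

# Hypothetical banned-word list for illustration only; not any platform's real list.
BANNED = ["turd", "rapist", "crush", "smoking"]


def flag_substring(title: str) -> list[str]:
    """Naive filter: flags a banned word appearing anywhere, even inside another word."""
    lowered = title.lower()
    return [word for word in BANNED if word in lowered]


def flag_whole_word(title: str) -> list[str]:
    """Slightly smarter filter: flags a banned word only when it stands alone.

    It still has no idea which meaning of the word the performer intended.
    """
    return [
        word
        for word in BANNED
        if re.search(rf"\b{re.escape(word)}\b", title, flags=re.IGNORECASE)
    ]


# Hypothetical video titles.
titles = [
    "Lazy Saturday Morning",
    "Role Play: My Therapist",
    "I Have a Crush On You",
    "Smoking Hot Secretary",
]

for title in titles:
    print(f"{title!r}: substring={flag_substring(title)} whole_word={flag_whole_word(title)}")
```

Run as written, the naive filter flags all four titles, including “Saturday” (for “turd”) and “Therapist” (for “rapist”). Whole-word matching clears those two but still flags “I Have a Crush On You” and “Smoking Hot Secretary,” because no amount of pattern matching tells a computer which meaning of a word a human had in mind.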

As I was writing, Kiley DMed me about yet another account strike: “Platform just flagged a video about impregnation fantasy because I say something like ‘put a baby in me!’”

Kiley explained that the endless dance around content bans means constantly coming up with new ways to title and describe videos, which frustrates not only adult performers but also their customers: If you’re someone who wants to watch hypnosis videos, for example, you’re probably going to search for “hypnosis,” not “influence,” the term some sites force Kiley to use for such content.

“How are customers supposed to find things?” Ladder asks. “The word ‘forced’ used to be used in femdom videos. Some are solo videos, like ‘get drunk for me,’ ‘drink more,’ a femdom telling a man to suck dick. There’s no actual forcing happening. But then ‘forced’ started getting banned, so ‘coerced’ started getting used, but then sites caught on and banned that word. People call it ‘encouraged’ now.”

These bans reify censorship by dictating what kind of content people can post, and how they can talk about it. This is not algorithmic softening. It is algorithmic violence.

Sex Work is (Admin) Work

This site-by-site labyrinth requires tremendous work to navigate. Most content creators diversify the content they post on each platform so they have more than one source of revenue. In part, this is designed to stay ahead of site closures, content strikes, account bans, and other issues. No one wants to put all their eggs in one basket, as non-sex workers learn each time a social media site crashes and burns and they lose a slew of relationships as a result. It may also reflect different facets and interests of a performer’s work. Performers also use cross-platform promotion to build awareness so customers know to seek their work out elsewhere, too, especially in instances where content restrictions mean that followers will need to find them on other sites to see all of their work.

Posting to multiple platforms every day is time-consuming, and wading through the mysteries of the algorithm makes it harder. Content creators have to painstakingly hand-craft advertising copy and think carefully about video edits, or come up with generic content designed to be as inoffensive as possible. Most are publishing a large volume of content to keep up with customer demand. Some, like Kiley, aren’t just content creators: They also act as administrators for other content creators, handling hundreds of videos every month.

Even when it’s content a performer loves making, whether solo or with collaborators, it may not be worth it if their posting options are limited. These constraints put obvious bounds on the economic freedoms of adult content creators—and they also limit people artistically.

More often than having a video taken down, Ladder says, “we’re not able to post it in the first place. We’re having to really self-censor. We’re making different content than we used to based on the rules. Whether I can upload a scene on what sites might allow it is in my mind when deciding what to do.”

Each site has not only its own rules about content and the words used to describe it but also its own policies about the supporting documentation content creators are required to upload. Content creators must meet strict legal requirements, themselves also the product of aggressive right-wing pressure: for example, providing copies of their IDs, model releases, and documentation known as 2257 compliance forms (named for 18 U.S.C. § 2257, the federal record-keeping law) attesting that all performers are of age, consenting, and agree to the distribution of their content. For every single video.

This is where Kiley’s infamous .pdfs come in: She has to painstakingly compile this documentation in the format preferred by a given site, including potentially providing retroactive documentation for content posted before a policy change. For older content, this can require going into paper records and scanning them. If the documentation deemed acceptable at the time no longer meets requirements, the offending videos may be removed; for example, if paperwork doesn’t include “downloading” on the list of potential uses of content that a performer consents to, or the ID on file was current at the time of filming but has since expired, a platform may determine that a video violates its terms of service. For performers who count on a robust back catalogue to attract and retain customers, this can be a significant earnings hit.
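The retroactive problem Kiley describes can be sketched the same way. In this hypothetical example (the record fields, both rules, and the dates are invented for illustration and do not come from any real platform), a video’s paperwork passes the check in force when it was filmed, then fails a stricter rule applied to the whole back catalogue years later.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class VideoRecord:
    """Hypothetical paperwork on file for one video."""
    title: str
    filmed_on: date
    id_expires_on: date
    consented_uses: set[str] = field(default_factory=set)


def check_old_policy(record: VideoRecord) -> list[str]:
    """Hypothetical original rule: the ID only had to be valid on the day of filming."""
    problems = []
    if record.id_expires_on < record.filmed_on:
        problems.append("ID expired before filming")
    return problems


def check_new_policy(record: VideoRecord, today: date) -> list[str]:
    """Hypothetical stricter rule, applied retroactively to every video on the site."""
    problems = check_old_policy(record)
    # The ID must still be current today, not just when the scene was shot.
    if record.id_expires_on < today:
        problems.append("ID on file has since expired")
    # The release must explicitly list downloading as a permitted use.
    if "downloading" not in record.consented_uses:
        problems.append("release does not list 'downloading'")
    return problems


clip = VideoRecord(
    title="Older clip",
    filmed_on=date(2019, 6, 1),
    id_expires_on=date(2022, 3, 15),
    consented_uses={"streaming", "sale"},
)

print(check_old_policy(clip))
print(check_new_policy(clip, today=date(2026, 1, 15)))
```

Nothing about the video or its paperwork changes between the two checks; only the rule does, and the old clip goes from zero problems to two.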

It should perhaps not come as a surprise that while sites demand sensitive information such as performers' legal names and home addresses, they do not exercise care with that material. Careless data storage puts performers in jeopardy: Kiley told me one performer contacted a platform asking for her tax information and received “an entire folder of tax information for other people.” These websites aren't the only actors in the adult industry who leave performers vulnerable: Adult Industry Medical Healthcare Foundation, which performed STI testing for performers, closed in 2011 after a major data breach affected around 12,000 people.

Do you love The Flytrap's art from amazing freelance artists and Art Director rommy torrico? You can find posters, tees, and more at our merch store! This week's art is from our incredible Art Fellow, Melissa Em; get your poster today.

You might particularly enjoy rommy's "Decrim Now" illustration, which accompanied Evette's "If We Valued Sex Workers, the Outcome of the Diddy Trial Would Have Been Different."

Pushed to the Margins

The new policies and restrictions are ostensibly meant to protect sex workers from the evils of the world, but in reality these practices squeeze people out of the profession. By making sex work so untenable, these policies actually put sex workers in extreme danger. When sex workers are socially and economically marginalized, their work stigmatized, and they are treated as disposable, they are forced into perilous situations simply because society has chosen to prioritize the rabid ideology of a handful of truly awful people over the humanity of sex workers.

Making it harder for sex workers to work “makes the bad numbers go up,” says Ladder, referring to statistics on CSAM, human trafficking, and child abuse. Ladder, like Kiley, like most reasonable people, is also opposed to human trafficking, nonconsensual content, and CSAM. Ladder and Kiley also both mentioned the extreme trauma reported among content moderators working for sites like Facebook, who plow through horrific, scarring material all day long. They see the worst parts of humanity. Explicit and sometimes unconscionable content is not a problem limited to adult platforms—and outlawing porn will not keep people safe online.

Making it harder for consenting adults to make and watch porn doesn’t eliminate the graphic violence that very real humans have to review behind the scenes of social media sites. It doesn’t resolve the toxic rot at the heart of the misogynist rape culture all around us. It doesn’t spare children from sexual abuse. It doesn’t empower women, provide them with economic opportunity, or make society safer, happier, and more pleasant.

A career at the margins is sometimes the only one people are able to pursue, whether it’s disabled people doing online sex work because other job opportunities are closed to them or Black and Latina trans women doing survival sex work. Keeping sex workers on the economic margins turns them into easy targets for abuse, and easy victims to blame. It leads to the doxxing and harassment of online sex workers. It inspired a racist misogynist to kill eight people at several Atlanta spas in 2021, targeting Asian Americans in sexualized workplaces often derisively known as “rub and tugs.” It drove 38-year-old Yang Song to her death when she tried to flee out a window during a vice sting in New York in 2017 and sparked the formation of Red Canary Song, a transnational sex worker-led mutual aid organization. It means that law enforcement can sexually and physically abuse sex workers with minimal consequences.

Ironically, trying to force people out of sex work makes it harder not only to find safe work but also to leave the industry for those who want to. One phone sex operator I spoke to commented that if she wanted or needed to leave the industry, “I don’t know where that puts me in terms of applying for other jobs in the future. What do you put on your resume?” This theme comes up over and over for adult performers, who deserve safe working conditions and career mobility, two things that are incredibly hard to come by when your work is so stigmatized.

People often refer to sex workers as “canaries in the coal mine” when it comes to online content restrictions and bans, a subject of growing interest as multiple states move ahead with ID requirements and other restrictions designed to limit access to adult content. People are beginning to wake up to the fact that these efforts aren’t just about abolishing sex work but about imposing a limited, hateful, right-wing Christian morality on everyone.

But, Kiley notes, people seem to forget that in this scenario, the canary dies.

This piece was edited by Evette Dionne and copyedited by Chrissy Stroop.